5. Experiments and Evaluation

The Cornell Grasping Dataset [19] contains 885 images of 240 distinct objects and provides ground-truth grasp labels for them.

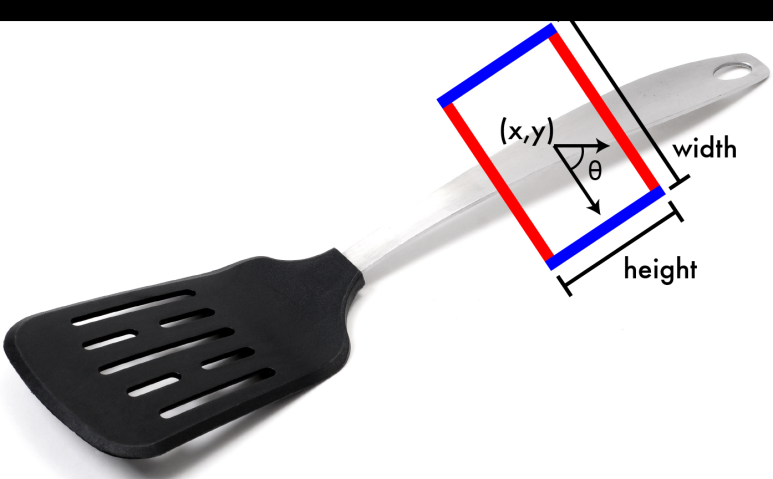

- rectangle metric accuracy
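The notes name the rectangle metric without defining it. As it is commonly described for the Cornell dataset, a predicted grasp rectangle counts as correct when its orientation is close to a ground-truth rectangle and the two overlap sufficiently (Jaccard index). A minimal sketch follows. The 30° and 25% thresholds, the `(cx, cy, theta, w, h)` parameterization, and all function names are assumptions for illustration and are not taken from this section.

```python
import math

def rect_corners(cx, cy, theta, w, h):
    """Corners of a w-by-h rectangle centred at (cx, cy), rotated by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    half = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in half]

def polygon_area(pts):
    """Absolute polygon area via the shoelace formula."""
    n = len(pts)
    total = sum(pts[i][0] * pts[(i + 1) % n][1] - pts[(i + 1) % n][0] * pts[i][1]
                for i in range(n))
    return abs(total) / 2

def _side(a, b, p):
    """Cross product (b - a) x (p - a); non-negative when p is left of or on edge a->b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def clip(subject, clipper):
    """Sutherland-Hodgman clipping of a convex polygon against a convex clipper."""
    output = subject
    n = len(clipper)
    for i in range(n):
        a, b = clipper[i], clipper[(i + 1) % n]
        inputs, output = output, []
        if not inputs:
            break
        prev = inputs[-1]
        for cur in inputs:
            d_prev, d_cur = _side(a, b, prev), _side(a, b, cur)
            if (d_prev >= 0) != (d_cur >= 0):
                # The segment prev->cur crosses the clipping line: add the crossing point.
                t = d_prev / (d_prev - d_cur)
                output.append((prev[0] + t * (cur[0] - prev[0]),
                               prev[1] + t * (cur[1] - prev[1])))
            if d_cur >= 0:
                output.append(cur)
            prev = cur
    return output

def rectangle_metric(pred, truth, max_angle_deg=30.0, min_jaccard=0.25):
    """True if pred matches truth in both orientation and overlap (assumed thresholds)."""
    # Grasp orientation is symmetric under a 180-degree rotation.
    diff = abs(pred[2] - truth[2]) % math.pi
    diff = min(diff, math.pi - diff)
    if math.degrees(diff) >= max_angle_deg:
        return False
    p, t = rect_corners(*pred), rect_corners(*truth)
    inter_pts = clip(p, t)
    inter = polygon_area(inter_pts) if len(inter_pts) >= 3 else 0.0
    union = polygon_area(p) + polygon_area(t) - inter
    return inter / union > min_jaccard
```

Since an image can carry several ground-truth rectangles, a prediction would typically be scored with `any(rectangle_metric(pred, t) for t in truths)`.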

6. Results

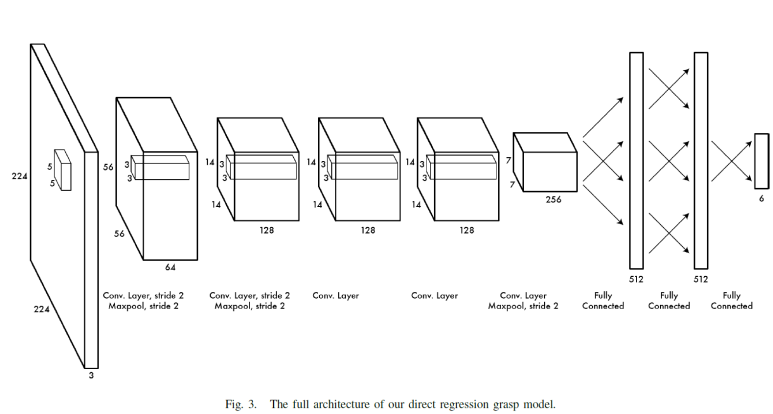

- direct regression model

7. Discussion

- Effect of pretraining on an image dataset

- Effect of using depth information in place of the B (blue) channel of the RGB input
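The channel substitution in the second point can be sketched as follows: the blue value of every pixel is replaced by a depth value, so that a network expecting three-channel input can be reused unchanged. Rescaling depth to the 0-255 range, the nested-list image layout, and the name `to_rgd` are assumptions for illustration, not details given in these notes.

```python
def to_rgd(rgb, depth):
    """Return an image whose third channel is depth rescaled to 0-255 (assumed scheme).

    rgb   -- rows of (r, g, b) tuples
    depth -- rows of depth readings with the same shape as rgb
    """
    flat = [d for row in depth for d in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero on a constant depth map
    return [
        [(r, g, round(255 * (d - lo) / span))
         for (r, g, _b), d in zip(rgb_row, depth_row)]
        for rgb_row, depth_row in zip(rgb, depth)
    ]

# Two pixels: the nearest depth maps to 0 and the farthest to 255.
image = [[(10, 20, 30), (40, 50, 60)]]
depth = [[0.5, 1.5]]
rgd = to_rgd(image, depth)
```

Keeping the input at three channels is what allows weights pretrained on ordinary colour images to be loaded without changing the first layer.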

8. Conclusion

The results show that grasp detection and object classification can be combined while maintaining accuracy.

References

[1] I. Lenz, H. Lee, and A. Saxena, “Deep learning for detecting robotic grasps,” in Proceedings of Robotics: Science and Systems, Berlin, Germany, June 2013.

[2] Y. Jiang, S. Moseson, and A. Saxena, “Efficient grasping from rgbd images: Learning using a new rectangle representation,” in IEEE International Conference on Robotics & Automation (ICRA). IEEE, 2011, pp. 3304–3311.

[3] A. Bicchi and V. Kumar, “Robotic grasping and contact: A review,” in IEEE International Conference on Robotics & Automation (ICRA). Citeseer, 2000, pp. 348–353.

[4] A. T. Miller, S. Knoop, H. I. Christensen, and P. K. Allen, “Automatic grasp planning using shape primitives,” in IEEE International Conference on Robotics & Automation (ICRA), vol. 2. IEEE, 2003, pp. 1824–1829.

[5] A. T. Miller and P. K. Allen, “Graspit! a versatile simulator for robotic grasping,” Robotics & Automation Magazine, IEEE, vol. 11, no. 4, pp. 110–122, 2004.

[6] R. Pelossof, A. Miller, P. Allen, and T. Jebara, “An svm learning approach to robotic grasping,” in IEEE International Conference on Robotics & Automation (ICRA), vol. 4. IEEE, 2004, pp. 3512–3518.

[7] B. León, S. Ulbrich, R. Diankov, G. Puche, M. Przybylski, A. Morales, T. Asfour, S. Moisio, J. Bohg, J. Kuffner, et al., “Opengrasp: a toolkit for robot grasping simulation,” in Simulation, Modeling, and Programming for Autonomous Robots. Springer, 2010, pp. 109–120.

[8] K. Lai, L. Bo, X. Ren, and D. Fox, “A large-scale hierarchical multi-view rgb-d object dataset,” in IEEE International Conference on Robotics & Automation (ICRA). IEEE, 2011, pp. 1817–1824.

[9] ——, “Detection-based object labeling in 3d scenes,” in IEEE International Conference on Robotics & Automation (ICRA). IEEE, 2012, pp. 1330–1337.

[10] M. Blum, J. T. Springenberg, J. Wülfing, and M. Riedmiller, “A learned feature descriptor for object recognition in rgb-d data,” in IEEE International Conference on Robotics & Automation (ICRA). IEEE, 2012, pp. 1298–1303.

[11] P. Henry, M. Krainin, E. Herbst, X. Ren, and D. Fox, “Rgb-d mapping: Using depth cameras for dense 3d modeling of indoor environments,” in the 12th International Symposium on Experimental Robotics (ISER). Citeseer, 2010.

[12] F. Endres, J. Hess, N. Engelhard, J. Sturm, D. Cremers, and W. Burgard, “An evaluation of the rgb-d slam system,” in IEEE International Conference on Robotics & Automation (ICRA). IEEE, 2012, pp. 1691–1696.

[13] A. Saxena, J. Driemeyer, and A. Y. Ng, “Robotic grasping of novel objects using vision,” The International Journal of Robotics Research, vol. 27, no. 2, pp. 157–173, 2008.

[15] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “Imagenet classification with deep convolutional neural networks,” in Advances in neural information processing systems, 2012, pp. 1097–1105.

[19] “Cornell grasping dataset,” http://pr.cs.cornell.edu/grasping/rect_data/data.php, accessed: 2013-09-01.
